29 November 2011

On seismic signals from landslides – new research

Posted by Dave Petley

Summary: new research from ETH Zurich on using seismic signal to detect large landslide events

Regular readers will be aware that, in my view, one of the most fascinating areas of landslide research at the moment is the seismic signals that landslides generate. Large, fast landslides, especially those formed from rock, are sufficiently energetic that they generate seismic waves that can be recorded remotely. This provides an opportunity to detect large events as they occur and, potentially, to locate them. In addition, processing of the signal allows the speed of movement, and possibly other parameters, to be determined. There are many applications of this, ranging from the academic (can we evaluate how many large landslides occur in an average year, and when and where do they happen?) to the practical (a well-developed system might give warning that a valley-blocking landslide has occurred).

Of course, all of this is in principle: we think that landslide-induced seismicity has this potential, but making it work in practice has yet to be achieved. Hence the need for research.

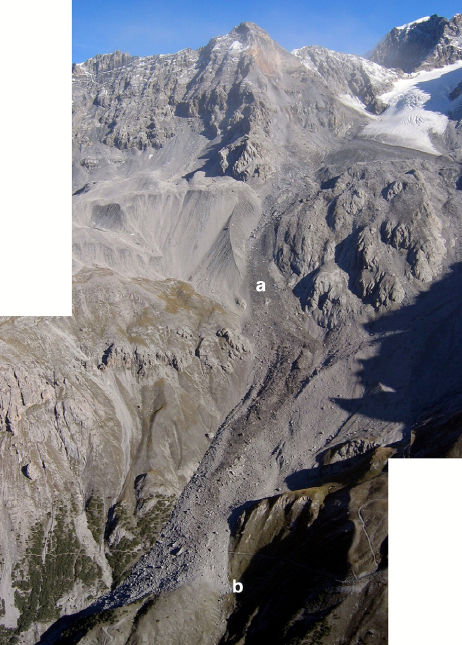

Over at ETH Zurich, Jeff Moore and his colleagues have been working on this issue. Last week a paper (Dammeier et al. 2011) was posted online at the AGU journal “Journal of Geophysical Research – Earth Surface” that describes an investigation of these seismic signals, focusing on whether properties of a landslide, such as volume, can be derived from the seismic signals recorded remotely. To do this, 20 known rockslides from the Alps were compiled (including the 2004 Thurweiser rock avalanche shown below), and the data recorded by the regional seismic network were analysed for each event.

The paper is interesting in a number of ways. First, it was clear that these events are indeed detectable and that they tend to have a characteristic spectrogram form that allows them to be differentiated from other events that generate seismic signals. The researchers also found that they could locate the events using the automated algorithms usually applied to earthquakes, with an average error of about 11 km. Of course, a circle of 11 km radius encloses a great deal of terrain (roughly 380 km²), but nonetheless this gives a very encouraging starting point for finding the event. Hopefully this will improve with time as better techniques are developed. Perhaps most usefully, the team found that simple metrics such as signal duration, peak value of the ground velocity envelope and velocity envelope area were correlated with landslide parameters such as volume, runout, drop height, and potential energy.
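To make the three signal metrics concrete, here is a minimal Python sketch of how they might be computed from a single ground velocity trace. This is not the procedure used in the paper: the envelope here is a crude moving average of the rectified trace (a stand-in for whatever envelope definition the authors used), and the function names, the `window` length and the 10% threshold used to pick the start and end of the signal are all my own illustrative assumptions.

```python
def envelope(velocity, window):
    """Crude envelope (assumption): moving average of the rectified trace."""
    rectified = [abs(v) for v in velocity]
    half = window // 2
    env = []
    for i in range(len(rectified)):
        lo = max(0, i - half)
        hi = min(len(rectified), i + half + 1)
        env.append(sum(rectified[lo:hi]) / (hi - lo))
    return env


def signal_metrics(velocity, dt, window=5, threshold_fraction=0.1):
    """Return duration, peak envelope value and envelope area for one trace.

    velocity: ground velocity samples; dt: sample spacing in seconds.
    threshold_fraction is a hypothetical choice: the signal is taken to
    last from the first to the last sample whose envelope is at least
    this fraction of the peak.
    """
    if not velocity:
        raise ValueError("velocity trace is empty")
    env = envelope(velocity, window)
    peak = max(env)
    threshold = threshold_fraction * peak
    above = [i for i, e in enumerate(env) if e >= threshold]
    duration = (above[-1] - above[0]) * dt
    # Trapezoidal integration of the envelope over time.
    area = sum(0.5 * (env[i] + env[i + 1]) * dt for i in range(len(env) - 1))
    return {"duration_s": duration, "peak_envelope": peak, "envelope_area": area}


# Synthetic trace for illustration only, not real seismic data.
trace = [0.0, 0.0, 1.0, -1.0, 1.0, -1.0, 0.0, 0.0]
print(signal_metrics(trace, dt=1.0, window=1))
```

In a real workflow these numbers would be computed at several stations and then compared against known landslide volumes, runouts and drop heights to look for the kind of correlations the paper reports.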

The research team then tested the resulting algorithm on three new landslide events, and succeeded in estimating key parameters to within an order of magnitude. There is at least one other interesting aspect. This is that the study starts to indicate a maximum detection distance for events of different sizes; for example, a one million cubic metre event can be detected out to a little over 100 km. This suggests that a quite dense seismic network is needed to do this properly.
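The link between detection distance and network density can be sketched in a few lines of Python. The example below simply counts which stations lie within a given detection range of an event, using great-circle distance. The station names and coordinates are invented for illustration, the 100 km range is taken from the figure quoted above for a one million cubic metre event, and the function names are hypothetical. How many stations are needed to locate an event is not stated in this post, so no minimum is enforced here.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in km between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def stations_in_range(event, stations, max_distance_km):
    """Return names of stations within max_distance_km, nearest first.

    event: (lat, lon); stations: dict mapping name -> (lat, lon).
    """
    distances = [
        (haversine_km(event[0], event[1], lat, lon), name)
        for name, (lat, lon) in stations.items()
    ]
    return [name for dist, name in sorted(distances) if dist <= max_distance_km]


# Hypothetical network and event location, for illustration only.
stations = {"STA1": (46.5, 10.0), "STA2": (46.9, 9.2), "STA3": (47.8, 8.0)}
event = (46.5, 10.5)
print(stations_in_range(event, stations, max_distance_km=100.0))
```

Running the same check with a smaller detection range, as would apply to a smaller landslide, shows how quickly the number of usable stations falls away in a sparse network.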

This study is a substantial step forward in this field, providing the quantitative reference algorithms that allow these analyses to be undertaken. It may well be that the relationships will need to be modified for other topographies, lithologies and land systems, so more work is needed in other mountain chains. However, the research validates the general approach, and as such is an important landmark. Hopefully it will stimulate more work in this important area. I hope that we’ll see more of this work by a variety of groups presented next week at the AGU Fall meeting in San Francisco.

Reference

Dammeier, F., Moore, J., Haslinger, F., & Loew, S. (2011). Characterization of alpine rockslides using statistical analysis of seismic signals. Journal of Geophysical Research, 116 (F4). DOI: 10.1029/2011JF002037

Dave Petley is the Vice-Chancellor of the University of Hull in the United Kingdom. His blog provides commentary and analysis of landslide events occurring worldwide, including the landslides themselves, latest research, and conferences and meetings.