21 January 2024

Swan song

Trumpeter Swan observed last week at Ragged Mountain Reservoir, near Charlottesville, Virginia

Well, this is it:

The last post at Mountain Beltway here at the AGU Blogosphere.

AGU has been so accommodating, hosting my blog for these past 13+ years, but they’ve let me know that it’s time to “sunset” the AGU Blogosphere. This didn’t come as a shock – the pace of posting around here has slowed way down over the past several years. I figured at some point, someone would pull the plug.

I will migrate all the content here to another site, and I may choose to continue blogging there, but at the moment my three-years-plus of low blogging inspiration feels like it’s going to continue. I’ve found more inspiration to write when I’m adding content to my free, online Historical Geology textbook.

I’ve also been motivated to make videos lately, and have produced a lot of YouTube videos over the past year. I hope to continue doing that.

And I’ve found a new social media home – Bluesky, a very oldschool-Twitter-feeling service, with none of the odious baggage that comes with the actual Xitter. (Quitting that when I did feels very prescient; I’m proud of that move.) Bluesky feels like the premuskian days at Twitter, complete with a lot of familiar “geotweeps” — I hope more geoscientists find their way there.

I’d like to thank María-José Viñas for recruiting me to be a founding blogger at the AGU Blogosphere, and I’m grateful for all the technical support AGU staff have extended my way through the years, in particular Larry O’Hanlon, Mike McFadden, and Anthony B.

So long, compadres. Happy trails!

1 January 2024

Yard list 2023

Another year has elapsed at the usual rate, and now it draws to a close. Time for me to tally up the year’s sightings. My goals for the year were to be in the Top Ten eBird users in my county, and to attempt to take a good photo of each species. I ended up at #6, and I’m happy with the photos I got (a selection of which you will see above).

I had 115 species in my yard this year, one more than last year:

- Canada Goose

- Turkey Vulture

- Red-shouldered Hawk

- Red-tailed Hawk

- Yellow-bellied Sapsucker

- Red-bellied Woodpecker

- Downy Woodpecker

- Northern Flicker

- Blue Jay

- American Crow

- Carolina Chickadee

- Tufted Titmouse

- Ruby-crowned Kinglet

- Golden-crowned Kinglet

- White-breasted Nuthatch

- Red-breasted Nuthatch

- Carolina Wren

- European Starling

- Eastern Bluebird

- Hermit Thrush

- American Robin

- House Finch

- Purple Finch

- American Goldfinch

- Dark-eyed Junco

- White-throated Sparrow

- Northern Cardinal

- Mourning Dove

- Northern Mockingbird

- Pileated Woodpecker

- Chipping Sparrow

- Song Sparrow

- Yellow-rumped Warbler

- Great Blue Heron

- Hairy Woodpecker

- Common Raven

- Brown Creeper

- Cedar Waxwing

- Field Sparrow

- Black Vulture

- Northern Harrier

- Sharp-shinned Hawk

- Cooper’s Hawk

- Merlin

- Eastern Towhee

- Bald Eagle

- American Kestrel

- Eastern Phoebe

- Eastern Meadowlark

- Wood Duck

- Hooded Merganser

- Wilson’s Snipe

- Belted Kingfisher

- Red-winged Blackbird

- Fish Crow

- Brown-headed Cowbird

- Common Grackle

- Pine Warbler

- Rock Pigeon

- Osprey

- Sandhill Crane

- Brown Thrasher

- Louisiana Waterthrush

- Wild Turkey

- Ruby-throated Hummingbird

- Barn Swallow

- Blue-gray Gnatcatcher

- Broad-winged Hawk

- Tree Swallow

- Killdeer

- Grasshopper Sparrow

- Wood Thrush

- Green Heron

- Northern Rough-winged Swallow

- Yellow-throated Vireo

- Orchard Oriole

- Palm Warbler

- Red-headed Woodpecker

- Great Crested Flycatcher

- Red-eyed Vireo

- Gray Catbird

- Indigo Bunting

- Chimney Swift

- Eastern Kingbird

- Prairie Warbler

- Blue Grosbeak

- Yellow-breasted Chat

- Baltimore Oriole

- Northern Parula

- Magnolia Warbler

- Scarlet Tanager

- American Redstart

- Eastern Wood-Pewee

- Ovenbird

- Mallard

- Common Yellowthroat

- Black-throated Blue Warbler

- Black-throated Green Warbler

- Rose-breasted Grosbeak

- Black-and-white Warbler

- Warbling Vireo

- Common Nighthawk

- Blackpoll Warbler

- Acadian Flycatcher

- Chestnut-sided Warbler

- Kentucky Warbler

- Canada Warbler

- Yellow-billed Cuckoo

- Eastern Screech-Owl

- White-eyed Vireo

- Bay-breasted Warbler

- Blackburnian Warbler

- Blue-headed Vireo

- Great Horned Owl

- Fox Sparrow

But where I focused my energy was not my yard, but my county. My county list was 163 species long:

- Canada Goose

- Turkey Vulture

- Red-shouldered Hawk

- Red-tailed Hawk

- Yellow-bellied Sapsucker

- Red-bellied Woodpecker

- Downy Woodpecker

- Northern Flicker

- Blue Jay

- American Crow

- Carolina Chickadee

- Tufted Titmouse

- Ruby-crowned Kinglet

- Golden-crowned Kinglet

- White-breasted Nuthatch

- Red-breasted Nuthatch

- Carolina Wren

- European Starling

- Eastern Bluebird

- Hermit Thrush

- American Robin

- House Finch

- Purple Finch

- American Goldfinch

- Dark-eyed Junco

- White-throated Sparrow

- Northern Cardinal

- Pileated Woodpecker

- Common Raven

- Mourning Dove

- Northern Mockingbird

- Gadwall

- Eurasian Wigeon

- American Wigeon

- Mallard

- Great Blue Heron

- Song Sparrow

- Yellow-rumped Warbler

- Fish Crow

- Chipping Sparrow

- Hairy Woodpecker

- Brown Creeper

- Cedar Waxwing

- Field Sparrow

- Black Vulture

- Northern Harrier

- Sharp-shinned Hawk

- Cooper’s Hawk

- Merlin

- Eastern Towhee

- Eastern Phoebe

- Rock Pigeon

- Eastern Screech-Owl

- Red-headed Woodpecker

- American Kestrel

- Loggerhead Shrike

- Bald Eagle

- Eastern Meadowlark

- Common Merganser

- Belted Kingfisher

- House Sparrow

- Pied-billed Grebe

- Wood Duck

- Hooded Merganser

- Wilson’s Snipe

- Bufflehead

- Winter Wren

- Fox Sparrow

- Ring-billed Gull

- Ring-necked Duck

- Ruddy Duck

- Red-winged Blackbird

- Barred Owl

- Brown-headed Cowbird

- Common Grackle

- Swamp Sparrow

- Tree Swallow

- Pine Warbler

- Horned Lark

- American Pipit

- Tundra Swan

- Killdeer

- American Woodcock

- Double-crested Cormorant

- Green-winged Teal

- American Coot

- Savannah Sparrow

- Horned Grebe

- Osprey

- Louisiana Waterthrush

- Sandhill Crane

- Brown Thrasher

- Great Horned Owl

- Rusty Blackbird

- Wild Turkey

- Blue-winged Teal

- Blue-gray Gnatcatcher

- Ruby-throated Hummingbird

- Blue-headed Vireo

- Barn Swallow

- Northern Rough-winged Swallow

- Broad-winged Hawk

- Indigo Bunting

- Grasshopper Sparrow

- Wood Thrush

- Green Heron

- Yellow-throated Vireo

- Orchard Oriole

- Palm Warbler

- Spotted Sandpiper

- Little Blue Heron

- Solitary Sandpiper

- Black-and-white Warbler

- Northern Parula

- Great Crested Flycatcher

- Red-eyed Vireo

- Gray Catbird

- Chimney Swift

- Eastern Kingbird

- White-eyed Vireo

- Yellow-breasted Chat

- Baltimore Oriole

- American Redstart

- Yellow Warbler

- Lesser Yellowlegs

- Prairie Warbler

- Blue Grosbeak

- Black-throated Blue Warbler

- Magnolia Warbler

- Scarlet Tanager

- Dunlin

- Hooded Warbler

- Eastern Wood-Pewee

- Ovenbird

- Common Yellowthroat

- Black-throated Green Warbler

- Rose-breasted Grosbeak

- Worm-eating Warbler

- Summer Tanager

- Warbling Vireo

- Common Nighthawk

- Blackpoll Warbler

- Kentucky Warbler

- Cerulean Warbler

- Bay-breasted Warbler

- Acadian Flycatcher

- Chestnut-sided Warbler

- House Wren

- Greater Scaup

- Canada Warbler

- Prothonotary Warbler

- Cliff Swallow

- Yellow-billed Cuckoo

- Roseate Spoonbill

- Swallow-tailed Kite

- Northern Bobwhite

- Great Egret

- Veery

- Blackburnian Warbler

- Gray-cheeked Thrush

- Cape May Warbler

- Western Flycatcher

- Greater Yellowlegs

Reflections: I continue to use Merlin and eBird as apps to enable my birding. I took on the role of Vice President of our local bird club, led a birding walk at Ivy Creek Natural Area, and started going out weekly with a group of dedicated birders. They are very talented, but I’ve been able to contribute some good observations to the team effort, which makes me feel good. Regardless of whether I’m with them or soloing, it gives me a special frisson of excitement when I see a really unusual or cool bird, as when I spotted a Northern Harrier feeding yesterday, or when I successfully tracked down rare birds like Roseate Spoonbill and Western Flycatcher. I don’t always find success when I go out birding, but you never know what’s out there if you don’t go take a look.

With trips to Mexico, southern California, Montana, the Outer Banks of North Carolina and the northern Pacific + Hawaii, I got 306 species globally for the year. I’ll not bother to list them all here, but I will link to the list.

For the coming year, I think my birding goals are: (1) top 5 in the county, (2) intentionally boost my Virginia list by visiting other parts of the state with different birds, and (3) backfill my old birding lists from youthful travels into eBird, so that my official “life list” number there gets closer to reality (it’s currently 378, but the reality must surely be something more like 600).

Happy new year!

25 December 2023

The Greywacke, by Nick Davidson

Just finished a geologically focused book that the readers of this blog might be interested in. It is a history of work on delineating the early part of the Phanerozoic timescale in Wales and Scotland. The majority of the book is about Adam Sedgwick and Roderick Murchison and their close collaboration and increasing divergence and eventual total estrangement as they sought to sort out the boundaries of different periods of geologic time. Sedgwick was focused on the Cambrian, and Murchison on the Silurian, and they bickered over the boundary in between. A certain section of strata was seen by both men as integral to “their” chosen period, and they were not able to resolve their differences prior to their deaths. Resolution only came with the meticulous work of Charles Lapworth, who sorted through the chaotically folded and faulted strata at Dob’s Linn, near Moffat, using careful tracking of the graptolite fossils to distinguish five packages of shale which contained three graptolite faunas – the middle of which he assigned to a whole new period, the Ordovician, which dwelt in the disputed zone of overlap. Author Nick Davidson also points out that a side theater was the North West Highlands of Scotland, where Murchison and James Nicol geologized and also came to disagreement, and once again Lapworth swooped in and was able to resolve the mess, this time invoking the brand new concept of mylonite as a marker of fault surfaces (“gliding planes”).

Davidson is an enthusiastic writer, and this volume is a slimmer, trimmer read than David Oldroyd’s or Martin Rudwick’s volumes on similar topics. The downside is that because Davidson is not a professional geologist, some errors creep in. Because I’m personally more familiar with Scotland’s geology than the Welsh strata, I noticed these in particular during Davidson’s discussion of the Highlands. For instance, he uses “quartz” instead of “quartzite” for the basal strata in the Ardvreck Group, treating the mineral name as if it were a rock, unselfconsciously pairing it with limestone. He gets the direction of transport on the Moine Thrust backward, and illustrates the Moine showing the fault clearly cutting across bedding at a steep angle instead of being parallel to bedding, which is the circumstance that made it so tricky for Victorian geologists to sort out. Furthermore, every time “Mylonite” appears in the text, it’s capitalized. I feel like these are mistakes a professional geologist familiar with the area wouldn’t have made, although that sort of thing can also get caught during fact-checking by an assiduous, geologically-conversant editor. When goofs in basic stuff like that appear in a text, it makes me distrust the wider body of information presented.

Those critiques aside, I feel like I learned a lot more about the origin of some of the prominent divisions of the geologic timescale, and gained insight into some of the insidious issues that bedeviled the pioneers of our science. For instance, there is apparently an unconformity within one unit, the Caradoc Sandstone, that everyone missed for a long time since the strata above and below the unconformity were lithologically identical. One technique that Davidson uses in his narrative is constantly redrawing the geological timescale as a graphic, as the geologists refined their understanding and/or arrived at new interpretations. One issue that I feel never really got the full focus of the book’s attention is the discrepancy between the way we use the term “greywacke” (graywacke) today and the way Davidson indicates it was apparently used in Victorian times, as a catch-all for early Paleozoic strata, but including shales and limestones as well as sandstones. A final chapter jumps to the Heezen/Ewing insight about submarine landslides being a mechanism for depositing graywacke in the deep ocean (after the snapping of telegraph cables due to the 1929 Grand Banks earthquake turbidity current), but how this relates to the main focus of the book is never really tied off in any sort of satisfying way.

All told, a mixed bag.

1 December 2023

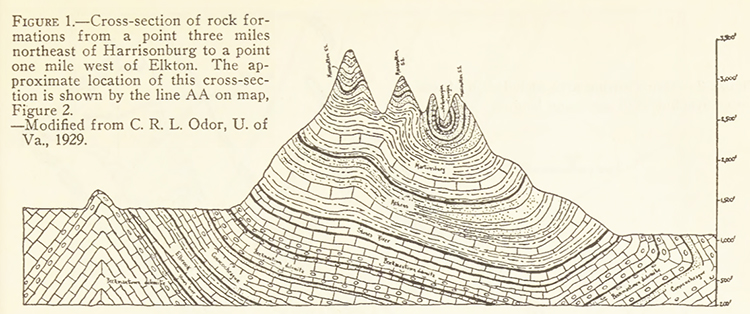

Friday fold: a Massanutten cross-section from a century ago

Happy Friday, friends!

Here’s an image I came across this week while searching for something else. It’s from a 1935 issue of The Virginia Teacher that describes the geology of the Massanutten mountain system for the benefit of students at James Madison University (then the State Teachers College at Harrisonburg) climbing the mountain on the weekends. The breezy article includes several illustrations, but I was particularly taken with this one, a modified reproduction of a 1929 sketch.

Here it is:

I’ve written a lot here about the geology of Massanutten, having lived in the depths of its differentially-eroded doubly-plunging synclinorium for 8 years. But this was the first time I’d seen this image. I like how it puts emphasis on the lower elevation of the Page Valley (right/east) relative to the Shenandoah Valley (left/west). I also note the overturned strata in the furthest east (right) position, something I’ve also documented on this blog.

The article accompanying the sketch describes the ridge-forming layer as the Tuscarora (rather than the Massanutten Sandstone), which is out of vogue these days (but I think ultimately correct). In terms of interpretation and elucidation, one thing the article did really well was emphasize that the entire Great Valley is a vast syncline in structure, that Massanutten Mountain is the deepest part of that structure, and that this brings the youngest strata (Tuscarora/Massanutten) to the lowest level anywhere along the strike of that great fold. Ironically, this results in the highest topographic elevations along the Great Valley, because of the Masscarora’s terrific resistance to weathering and erosion.

That Tuscanutten is really somethin’!

17 November 2023

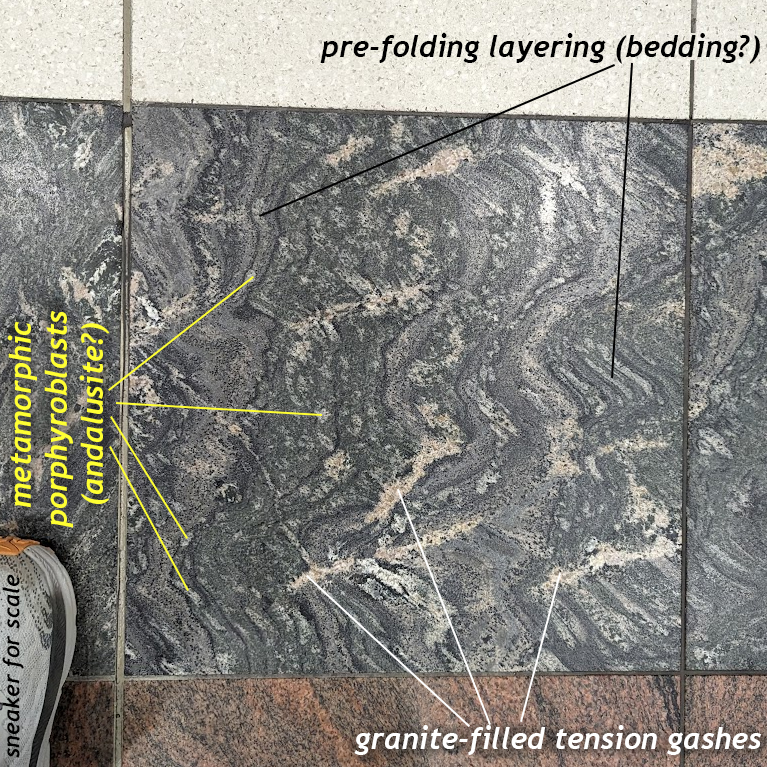

Friday fold: Floor paver in the Atlanta airport

On my way back to Virginia from Hawaii, I had a three-hour layover in the Atlanta airport. There, I spotted this charismatic stone paver on the terminal floor. It showed folds, and so I snapped a photo, but it also shows plenty more…

An annotated copy:

Happy Friday, all!

9 November 2023

Glacial geology of Discovery Park, Seattle

[Cross-posted at the STEMSEAS blog]

Prior to embarking on the R/V Thompson for the inaugural STEMseas2YC oceanographic transit, a group of my fellow two-year-college STEM faculty and I set out on a geological field trip. Seattle is glaciated territory, and the majority of the ground is underlain by glacial sediments of one sort or another. There’s surprisingly little bedrock!

Whenever I travel someplace new, I want to explore the local geology, and this brief sojourn in Seattle was no different. As soon as it was decided that I was going on the STEMSEAS cruise, I started making inquiries with Seattle-based geologists about what was worth seeing. What would have been ideal is for one of them to offer to take me out to cool outcrops, so I could just learn and enjoy. Someone else could be in charge! But that didn’t happen, so I volunteered to run an excursion myself, with the caveat that I do not know my way around. Four of my fellow STEMSEAS cruise participants indicated an interest in joining, so now I was committed. I ruled out a few locations because of distance, and another because its outcrop was underwater at high tide. And indeed, the ~6 hour window I had to run the field trip coincided with high tide. Furthermore, when I actually arrived in Seattle, it was raining, and so that constrained the logistics even further.

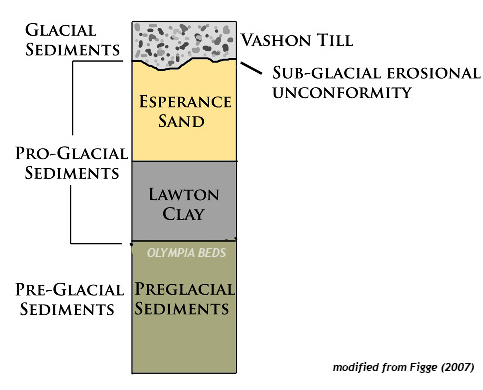

So: I settled on a 3-hour excursion to Discovery Park, a triangular promontory of land that pokes westward out into Puget Sound. What Discovery Park does well is glacial stratigraphy. I consulted several guides, most usefully J. Figge’s (2007) guide to the geology of the site.

Joining me were four of my STEMseas2YC colleagues: Rebecca Sperry (Salt Lake Community College), Kusali Gamage (Austin Community College), Karen Menge (Delgado Community College) and Tess Weathers (Chabot College). We took Lyfts to Discovery Park, and met up at the lighthouse at its westernmost point, then worked our way along the bluffs at South Beach to see the strata exposed there.

We encountered three stratigraphic units. From youngest (uppermost) to oldest (lowermost), which is the order we encountered them, they were: the Vashon Till, the Esperance Sand, and the Lawton Clay. All tell different aspects of the glacial story in Puget Sound. Further downsection (and to the southeast along the bluff) is another unit, the preglacial Olympia Beds, but we were not able to see these on our field trip due to beach access being swamped by the tide.

Honestly, I was delighted that we were able to examine three of the units in decent detail, and further pleased that they told a coherent story. The preglacial sediments of the Olympia Beds record rivers and floodplains, and then these were swamped by a proglacial lake, Glacial Lake Russell, which stilled the depositional environment dramatically. The idea here is that the Puget Sound region was a lowland, into which first rivers, and then glaciers, drained. At first, the Puget Lobe of glacial ice pushed its way south into the topographic basin, blocking the shortest route to the sea. But rivers kept flowing, and so water backed up behind the dam-like obstacle of the glacial terminus. Conformably, the sedimentary sequence shifts from the Olympia Beds to the overlying Lawton Clay as the water got deeper and more still. In these calm waters, clay and silt were deposited, perhaps at rates up to an inch per year. These lake sediments make up the Lawton Clay.

Overlying the Lawton Clay is a unit called the Esperance Sand. It’s orange and sandy, as the name implies. The sand is still interpreted as proglacial sediment, but now it’s a return to higher energy levels associated with prograding (advancing) outwash streams, ultimately derived from the glacial front. I didn’t get any photos of it, unfortunately. Oops.

Overlying the Esperance Sand is what really drew me to Discovery Park: a nice exposure of till. The Vashon Till was deposited directly by the Puget Lobe of the Cordilleran ice sheet. Poorly-sorted till is a signature that “glaciers used to sit right here.”

A close-up of the till’s texture:

And down on the bench just above beach level, we found a lovely granitic glacial erratic. This was presumably weathered out of the Vashon Till, and may have experienced some post-glacial spheroidal weathering. It was easily the biggest boulder we saw, about a meter across. Furthermore, a detailed look at the erratic showed the presence of microgranular mafic enclaves (MMEs) within the granite, evidence of magma mingling at depth underneath a volcanic arc in the deep time past. Later, uplift and erosion brought these mid-crustal rocks to Earth’s surface, probably in British Columbia, Canada, and then a glacier chewed into them, plucking out a massive chunk and transporting it southward. It crossed the U.S./Canada border without a passport, and was dumped along with gazillions of other sedimentary particles of every size imaginable. When wave erosion milled away at the base of the Discovery Park bluffs, blocks of till dropped down in landslides, which were then sorted through by rain and waves. Smaller particles were stripped away, and larger ones were left behind. If you infer that because of its massive size, this erratic is also quite heavy and therefore unlikely to move far by watery means alone, that suggests it’s probably not too far from where the glacier left it ~18,000 years ago! It’s just that the surrounding finer-grained sediment has been stripped away in the interim.

As you can see from the photos above, the rain stopped and the sun shone. It was pretty windy, but we all really enjoyed the outing.

Reflecting on the field trip now, after almost a week at sea, I ponder the nature of expertise.

I’m not a Seattle-area geologist, nor a glacial geologist. But I knew enough to enthusiastically and humbly share some understanding with my colleagues, who included an oceanographer, an engineer, a geophysicist, and a cell biologist. I felt gratified that we got decent weather and that I was able to be useful in interpreting the geology of this place I’d never previously visited.

Now we are all literally at sea, and that means I am figuratively at sea. I’m learning so much about stuff I haven’t thought about for a long time, or in some cases, ever. For instance, I’m working on a project focused on plankton, but I don’t know my globigerinid forams from my cyclopoid copepods. There’s a steep learning curve, but it’s fascinating. It’s humbling and grounding to realize how much I don’t know about seafloor topography, oceanic circulation, the physical characteristics of seawater, and the tools we use to explore the vast realm of Earth’s oceans.

One of the great strengths of the STEMSEAS program is that its goal is to help nurture student growth in the unique setting of an oceanographic vessel. The program helps build science identity in students by giving them learning experiences, while embracing the fact that none of us knows it all. Oceanography applies so many different scientific disciplines to stretching our understanding of the ocean. STEMSEAS students learn and grow, and I think that’s true for STEMSEAS mentors, too!

Resources:

Ralph Dawes’ website on Discovery Park

J. Figge (2007) guide to the geology of Discovery Park

7 November 2023

Near-empty seas, near-empty air

[Cross-posted at the STEMSEAS blog]

One of my students asked me this morning what the most interesting thing I’ve learned on this expedition is. This is my first time going to sea out in the open ocean, out into the High Seas. All my previous time aboard oceangoing vessels was in the coastal waters of some landmass (Alaska’s Inside Passage, the Chiloé Archipelago of Chile, the Galápagos). Actually, come to think of it now that I list those out, they’re all eastern Pacific locations, more or less butted up against the western shores of the Americas. These locations are zones of oceanic upwelling, where cold, nutrient-rich waters from the deep ocean are tugged upward as winds blow surface waters offshore.

Those coastal waters are famously rich fisheries, a major “hunting ground” for marine protein to feed humans. But they are also the “hunting ground” of countless marine species, from great white sharks and turtles to whales and seals. And birds.

I’m a birder, so one of my side motivations in joining the STEMseas2YC transit cruise aboard the R/V Thompson was to see what sorts of birds I might encounter out in the way-offshore Pacific Ocean.

Here’s what I saw yesterday:

In an hour of standing on deck and watching, I saw five individuals of one species: the Black-footed Albatross.

On one level, that’s so super awesome it makes me squeal with glee – this is a “lifer” species for me, and I’m thrilled to see it, in a place where so, so few people get to go.

On the other hand: I saw five individuals of one species.

That’s it.

Five total birds.

I find this paucity of species quite striking. It’s as striking as the deep, vivid blue of the sea here, or the fact that in every direction we look, there’s nothing – no land, no ships, nothing but waves and water. Below me is five kilometers of ocean – and to a first approximation, it’s basically empty. It’s shockingly devoid of life.

Being a scientific research vessel, the R/V Thompson is equipped with a nifty flow-through seawater sampling system. Using this, we can easily pull off an aliquot of local seawater and then look at it under the microscope to see what sorts of plankton inhabit these waters. We have been surprised (and maybe a little disappointed) to see that the plankton too are quite sparse. Our procedure is to run seawater through an 80-micron sieve for half an hour, then look at the final few cubic centimeters of water and then search and search those drops for signs of life.

Here are a few I photographed:

A few copepods, some diatoms, a handful of spiky radiolarians — these were noted, but only after a dozen science professors spent an hour scouring the seawater samples in earnest. The water is pretty empty of all life, not just birds. It’s “oligotrophic” in oceanography-speak.

Without many nutrients, there’s not much plankton, and so there aren’t many fish, and so there’s not much for my beloved birds to eat. It’s sparse out here… and the albatrosses keep cruising and cruising…

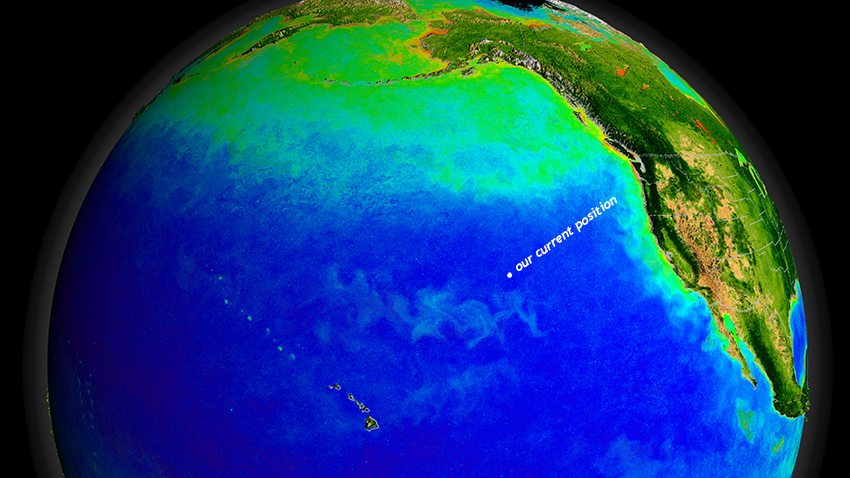

Here’s a NASA image showing primary productivity in the North Pacific, where warmer colors indicate more chlorophyll, and thus phytoplankton, the base of the flow of calories through the oceanic ecosystem, the food source for zooplankton and all the other heterotrophs (“eaters”) further up the food chain… Deep blue shows the absence of chlorophyll, and that’s where we are right now:

NASA/Goddard Space Flight Center, The SeaWiFS Project and GeoEye, Scientific Visualization Studio

So, so much of the Pacific is a “marine desert,” lacking in nutrients and thus in life.

And the Thompson is out in the middle of all that nothing, motoring at 12½ knots day and night, through the near-boundless saltwater, eyes peeled for signs of life…

3 November 2023

Friday fold: Neoproterozoic rhyolite (of the Konnarock Formation?)

Happy Friday!

Here’s a cool rock I saw last weekend on the 2023 Virginia Geological Field Conference. This is a flow-banded rhyolite from the Mount Rogers area of Virginia (near the North Carolina border in the highest part of the Blue Ridge province). The folding you see here is probably primary (lava flow dynamics) rather than tectonic (deformation associated with Appalachian mountain building).

This particular block was in an intriguing position – surrounded by laminated sedimentary layers (some with dropstones) of the Neoproterozoic Konnarock Formation. If that positioning is itself conformable and primary, then the age of this rhyolite constrains the age of the Konnarock Formation’s deposition, an interpretation that was argued in an exciting 2020 Science paper by Scott MacLennan and others. The exact relationship of this rhyolite to the Konnarock is the key to their conclusion that there was glaciation in the Mount Rogers area tens of millions of years prior to the first Snowball Earth, and thus global climate was already cold prior to the onset of the planetary-scale “Snowball.” However, having now visited the site in person, I was sad that the exact contact between the two was not especially well exposed. There’s an interval of shrubs and bushes that obscures the exact relationship between the two units. I think it’s still plausible that this rhyolite may have been a nubbin of bedrock poking up into the lake which deposited the Konnarock, and therefore its 751 Ma age is an age for the rocks underlying the Konnarock instead: this would mean the Konnarock is indeed a Snowball Earth deposit (though a lacustrine one rather than marine — with no cap carbonate). (There’s also a possibility that Alleghanian-age faults have shuffled the stratigraphic deck here a bit, placing older rhyolite atop younger glaciogenic sediments.)

Anyhow – great rocks, beautiful and intriguing. The VGFC was fortunate to enjoy glorious weather. A good time was had by all.

1 November 2023

Four new videos about east coast geology

Hi all! I’ve been putting my energy into video production more than blogging lately, but let me share some of that work with you:

15 September 2023

Friday fold: Candler Formation

On a birding hike yesterday morning, I found this:

This is a little slab of kinked phyllite of the Candler Formation. This metamorphic rock started as mud (and ash) deposited atop the Catoctin Formation, and it was later squeezed and heated during Appalachian mountain-building, encouraging the growth of micas at the expense of clay. A strong differential stress made sure those micas grew in one preferred orientation, and that resulted in a foliation. Later, that foliation was squeezed from a new direction, and because it has a strong mechanical layering, it deformed under the new stress by kinking. Held at an angle to the (diffuse) sunlight, the different orientations to the foliation in the kinked and not-kinked parts of the sample reflect the light differently. This creates the appearance of light/dark stripes running through the sample in a still photograph, but those are all the same stuff, just in different orientations relative to the incoming light.

Where have you seen folded rocks lately?

Happy Friday!

Callan Bentley is Associate Professor of Geology at Piedmont Virginia Community College in Charlottesville, Virginia. He is a Fellow of the Geological Society of America. For his work on this blog, the National Association of Geoscience Teachers recognized him with the James Shea Award. He has also won the Outstanding Faculty Award from the State Council on Higher Education in Virginia, and the Biggs Award for Excellence in Geoscience Teaching from the Geoscience Education Division of the Geological Society of America. In previous years, Callan served as a contributing editor at EARTH magazine, President of the Geological Society of Washington and President of the Geo2YC division of NAGT.