25 August 2016

Six stats tips for science communicators.

Posted by Shane Hanlon

By Brendan Bane

Attentive science journalists. Photo credit – Lauren Lipuma

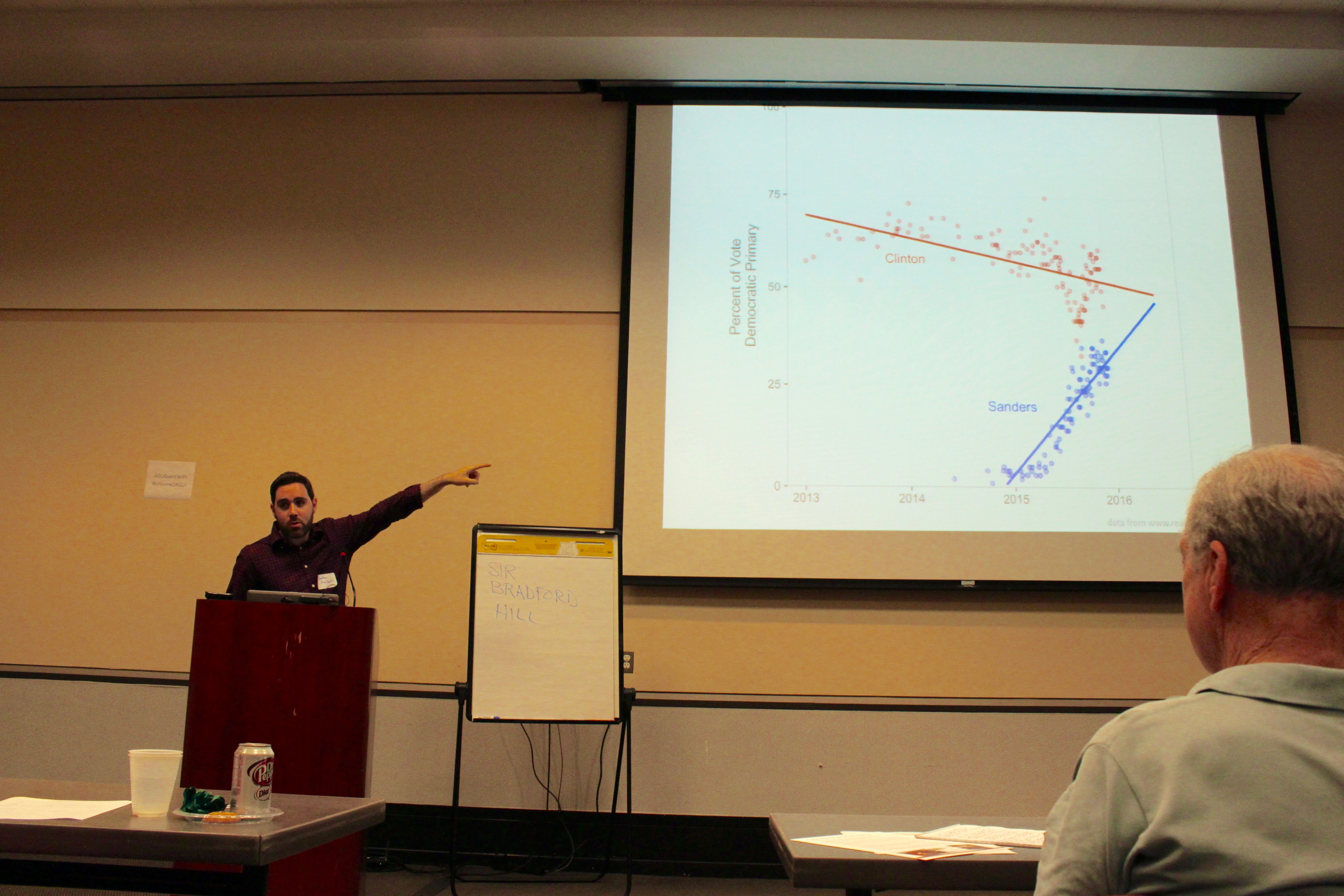

As a courtesy to Washington DC-based and visiting journalists, AGU recently invited reporters and researchers to gather, eat, drink, and discuss a sometimes daunting subject: statistics. On Thursday, August 11, AGU partnered with STATS.org, Sense About Science USA, and the DC Science Writers Association to host a workshop on interpreting data through statistics.

Statisticians Regina Nuzzo of Gallaudet University and Jonathan Auerbach of Columbia University led the workshop, sharing several tips on how best to interview researchers about the statistical significance of their findings. We’ve compiled a list of those tips below.

How to interpret p-values.

To think clearly about p-values, Nuzzo encouraged the audience to treat the number as a “surprise index.” Remember, a p-value is the probability of seeing results at least as extreme as the researcher’s, assuming their null hypothesis (typically, that there is no real effect) is true. The p-value addresses the question: are the results unusual? In Nuzzo’s words, “should you feel surprised?”

If the p-value in question is greater than 0.05, the conventional cutoff, then don’t feel surprised: results like these are the kind you could reasonably expect from chance alone. If the value is less than 0.05, however, results this extreme would be unlikely to arise by chance if the null hypothesis were true.
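To make the “surprise index” concrete, here is a minimal Python sketch built on a hypothetical example that was not part of the workshop: a coin flipped 100 times, with the null hypothesis that the coin is fair. The function name `two_sided_binomial_p` is our own; it computes the exact probability, under a fair coin, of getting a head count at least as far from 50 as the one observed.

```python
from math import comb


def two_sided_binomial_p(heads: int, flips: int) -> float:
    """Exact two-sided p-value for `heads` in `flips` tosses of a fair coin."""
    expected = flips / 2
    observed_gap = abs(heads - expected)
    # Count every possible outcome at least as far from the expected value
    # as the observed one (in either direction).
    extreme = sum(comb(flips, k) for k in range(flips + 1)
                  if abs(k - expected) >= observed_gap)
    # Under the null, each head count k has probability comb(flips, k) / 2**flips.
    return extreme / 2 ** flips


for heads in (50, 55, 60, 65):
    p = two_sided_binomial_p(heads, 100)
    verdict = "surprising" if p < 0.05 else "not surprising"
    print(f"{heads}/100 heads: p = {p:.4f} ({verdict} at the 0.05 cutoff)")
```

The p-value shrinks as the head count drifts away from 50. Note that 60 heads lands just above 0.05 while 65 heads falls well below it, a reminder that the cutoff is a convention rather than a bright line between real and chance results.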

Beware of p-hacking.

Researchers have to cut their data off at some point. It might be overkill, for example, to consider the past 1,400 years of climate data when looking at a recent change in local precipitation levels. Because they can’t consider everything, researchers have to decide where to truncate their data.

Nuzzo encouraged the audience to ask scientists why they chose their cutoff point. Because data can be manipulated to produce statistically significant results (low p-values), a practice known as “p-hacking” or “data dredging,” reporters must be wary. Simply asking about the researchers’ reasoning can give you a better sense of how they framed their study.
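The danger of a freely chosen cutoff can be simulated. The minimal sketch below is our own illustration under stated assumptions, not something presented at the workshop: it generates pure noise (so there is no real effect to find), tests whether the mean differs from zero using a z-test that assumes unit-variance Gaussian noise, and compares an honest analysis that uses one cutoff fixed in advance with a “hacked” analysis that tries every possible start point and keeps only the smallest p-value. The function names and parameters are hypothetical.

```python
import math
import random


def z_test_p(values):
    """Two-sided p-value for 'the mean differs from 0', assuming unit-variance Gaussian noise."""
    z = sum(values) / math.sqrt(len(values))  # equals mean * sqrt(n)
    return math.erfc(abs(z) / math.sqrt(2))


def false_positive_rates(n_series=1000, length=50, min_window=10, seed=1):
    """Share of pure-noise series declared 'significant' with and without cutoff shopping."""
    rng = random.Random(seed)
    honest_hits = hacked_hits = 0
    for _ in range(n_series):
        # Pure noise: any "significant" finding here is a false positive.
        series = [rng.gauss(0, 1) for _ in range(length)]
        # Honest analysis: the cutoff (here, use all the data) is fixed in advance.
        if z_test_p(series) < 0.05:
            honest_hits += 1
        # Hacked analysis: try every start point that leaves at least
        # `min_window` values, then report only the smallest p-value.
        best_p = min(z_test_p(series[start:])
                     for start in range(length - min_window + 1))
        if best_p < 0.05:
            hacked_hits += 1
    return honest_hits / n_series, hacked_hits / n_series


honest, hacked = false_positive_rates()
print(f"fixed cutoff:  {honest:.1%} false positives")
print(f"chosen cutoff: {hacked:.1%} false positives")
```

The fixed-cutoff rate should hover near the nominal 5%, while shopping among start points pushes it well above 5%, even though every series is noise.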

Dr. Regina Nuzzo with an engaged audience. Photo credit – Lauren Lipuma

Ask for multiple lines of evidence.

Statistical relationships alone do not establish that a phenomenon exists, as Tyler Vigen’s Spurious Correlations illustrates. To counter this, Nuzzo suggested asking for multiple lines of evidence in addition to any statistical results. What reasons did your researcher have to explore a statistical relationship in the first place? Did they propose a mechanism to explain their results? By asking the researcher which lines of evidence best support their findings, you can gain or lose confidence in their results.
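Spurious correlations are easy to produce on purpose. As a minimal sketch (our own illustration, not taken from the workshop or from Vigen’s site), the Python below generates pairs of series that are independent by construction and counts how often they still show a strong Pearson correlation, here assumed to mean |r| > 0.5. Series that wander over time, modeled as random walks, cross that bar far more often than unstructured noise does. The function names, series length, and threshold are assumptions chosen for illustration.

```python
import math
import random


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sd_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    sd_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (sd_x * sd_y)


def random_walk(rng, length):
    """A series that drifts: each value is the previous one plus random noise."""
    position, path = 0.0, []
    for _ in range(length):
        position += rng.gauss(0, 1)
        path.append(position)
    return path


def white_noise(rng, length):
    """A series with no memory: independent draws around zero."""
    return [rng.gauss(0, 1) for _ in range(length)]


def share_strongly_correlated(make_series, trials=500, length=100,
                              threshold=0.5, seed=7):
    """Fraction of independent pairs whose |r| exceeds `threshold`."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(trials):
        a = make_series(rng, length)
        b = make_series(rng, length)  # generated independently of `a`
        if abs(pearson_r(a, b)) > threshold:
            hits += 1
    return hits / trials


print(f"random walks: {share_strongly_correlated(random_walk):.1%} of pairs have |r| > 0.5")
print(f"white noise:  {share_strongly_correlated(white_noise):.1%} of pairs have |r| > 0.5")
```

Because the two series in each pair never interact, every strong correlation counted here is spurious by design.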

Ask about model validation.

If your researchers developed a model, ask them how it was validated. Imagine you’re interviewing a seismologist about a model they devised to assess relationships between earthquakes in a fault network. To test the model’s efficacy, the researchers could use historical measurements to “predict” earthquakes that have already happened, then check how well those predictions match the recorded events.
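The hindcasting idea can be sketched in a few lines. The Python below is a minimal illustration under loudly stated assumptions: the yearly event counts are synthetic (a built-in upward trend plus noise, not real seismic data), the “model” is a simple least-squares trend line, and the comparison baseline is the average of the calibration years. The key step is the chronological split: the model is fitted only on earlier years and then scored on later years it never saw. All names here are hypothetical.

```python
import random


def synthetic_record(start=1980, end=2015, seed=3):
    """Hypothetical yearly event counts: a steady trend plus Gaussian noise."""
    rng = random.Random(seed)
    return [(year, 20 + 1.0 * (year - start) + rng.gauss(0, 1))
            for year in range(start, end + 1)]


def fit_trend(data):
    """Ordinary least-squares line through (year, count) pairs."""
    n = len(data)
    mean_x = sum(x for x, _ in data) / n
    mean_y = sum(y for _, y in data) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in data)
             / sum((x - mean_x) ** 2 for x, _ in data))
    intercept = mean_y - slope * mean_x

    def predict(year):
        return intercept + slope * year

    return predict


def mean_absolute_error(predict, data):
    """Average size of the prediction miss, in events per year."""
    return sum(abs(predict(x) - y) for x, y in data) / len(data)


record = synthetic_record()
split_year = 2005
calibration = [(x, y) for x, y in record if x < split_year]  # used for fitting
held_out = [(x, y) for x, y in record if x >= split_year]    # "already happened"

trend_model = fit_trend(calibration)
baseline_level = sum(y for _, y in calibration) / len(calibration)


def baseline(year):
    return baseline_level  # naive: ignore the year, predict the historical average


print(f"trend model MAE on held-out years: {mean_absolute_error(trend_model, held_out):.2f}")
print(f"baseline MAE on held-out years:    {mean_absolute_error(baseline, held_out):.2f}")
```

A model that only looks good on the data it was fitted to has not really been validated; the held-out comparison against a simple baseline is the part worth asking researchers about.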

Ask about registered reports.

Registered reports offer an alternative to the conventional publishing model. Instead of accepting a completed study for review, some journals offer researchers the chance to submit their proposed study before they even collect any data. If the journal accepts the proposed analysis, the results will almost always be published, regardless of whether they are statistically significant. By nearly guaranteeing publication, journals can remove the incentive for data manipulation. Be sure to ask your researcher if they filed a registered report.

Jonathan Auerbach relating to the DC audience. Photo credit – Lauren Lipuma

If you need help, just ask.

If you’re poring over statistics that are especially perplexing, consider reaching out to STATS.org. They offer advice to journalists who have questions on specific studies, or to communicators who just want to talk math. Send them your questions, and a statistician should respond shortly.

-Brendan Bane is an intern in AGU’s Public Information department. Follow him on Twitter @brendan_bane.

The Plainspoken Scientist is the science communication blog of AGU’s Sharing Science program. With this blog, we wish to showcase creative and effective science communication via multiple mediums and modes.