21 February 2017

Q&A, episode 3

Posted by Callan Bentley

Time for another episode of “you ask the questions”… Episode 1 here; Episode 2 here.

Remember I have a Google Form to allow anyone to submit questions anonymously. Silly questions are fine, like last week’s one about walking on the Sun. Even if it’s a goofy notion, I might be able to make an interesting response. My goal is to answer a couple of questions per week. This week, I only got to one. But it’s a meaty one! Sink your teeth in:

5. A possible new continent was discovered. Article in Nature here (Caution, scary science). It sounds like this discovery hinges on determining the ages of rocks. I know about Carbon Dating (and other isotopes), but that relies on an organism taking up exogenous carbon until it dies. How do you date rocks, particularly when you can’t trust that they’re “static” in the crust?

First off: I appreciate the detail in this question, and the citation of sources!

This is a neat finding that changes the way we view a particular spot. It’s an example of a small thing (literal atoms in a literal grain of sand) that implies a big thing: “a continent!” Much of geology advances this way: by studying small details, and then reflecting on what those tiny bits imply about the very biggest picture.

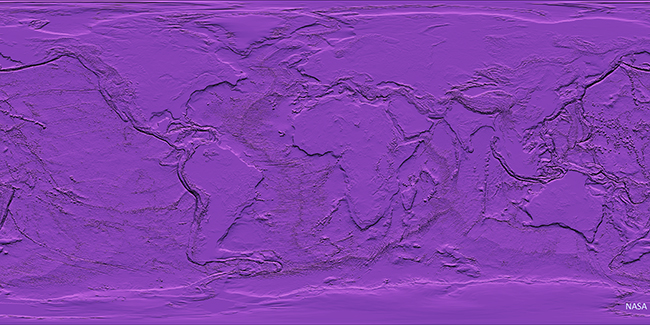

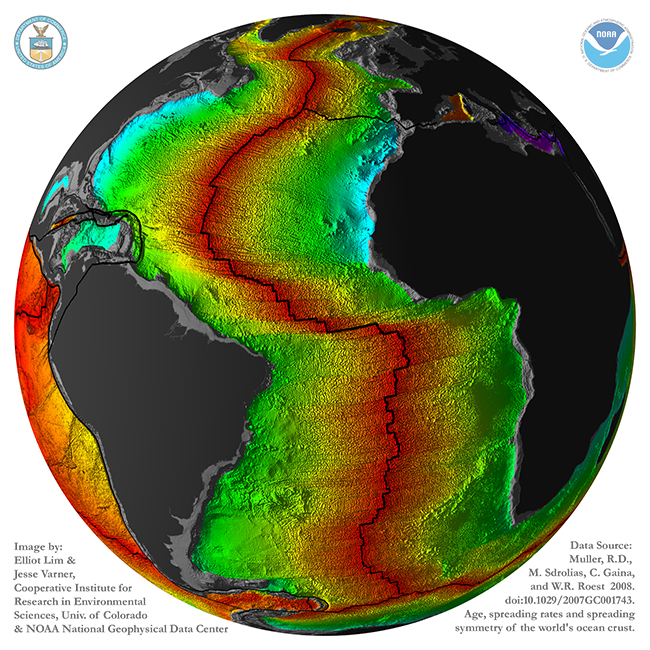

So let’s start with the big picture: if you were an extraterrestrial coming to planet Earth for the first time, you’d likely be struck by two observations: (1) the presence of life, and (2) a stark dichotomy in the appearance of the crust. About 2/3 of the planet is covered by a relatively thin, dark, dense kind of crust (basalt and gabbro are the main rock types), while the other third is a thick, buoyant, light-colored kind of crust (granodiorite and gneiss are the main rock types). When you look at an image of our planet with the ocean stripped away and all the land cover color variation zeroed out, it’s striking how bifurcated our crust is. Put on your alien goggles and take a look:

This image, by Lim and Varner (based on data in Müller, et al., 2008) shows it in a different way: gray is continental crust, rainbow colors show oceanic crust (with red = young, and blue/purple = old):

Note that continental crust doesn’t correspond one-for-one with the continental landmasses as we know them. Parts of the continents are submerged below sea level: southeast of Argentina, for instance, or Canada’s Hudson Bay, or the Arafura Sea between Australia and New Guinea. Also, there are islands like Madagascar, Greenland, and Cuba that are made of continental crust, but are not “continents” in their own right. And just because it’s an island, that doesn’t make it continental crust: Hawaii, Iceland, and the Kerguelen Plateau are all examples of thick piles of basalt.

Not only is there this stark difference in the makeup of the outermost rocky layer of the Earth, but there’s a stark age difference, too. Oceanic crust is constantly being generated and then recycled. It forms at oceanic ridges, and is destroyed at subduction zones. Oceanic crust is ephemeral, on the geologic timescale. It’s temporary. The oldest oceanic crust on the planet is only about 200 million years old. In contrast, the buoyant rafts of continental crust resist being subducted, and when “matched” against oceanic crust, will override it, “winning” (surviving) every time. It persists. The oldest continental crust is over 4000 million years old. The average age of continental crust is younger than that, but there’s still an incredible discrepancy between the two: the continental crust is on average an order of magnitude older than the oceanic crust.

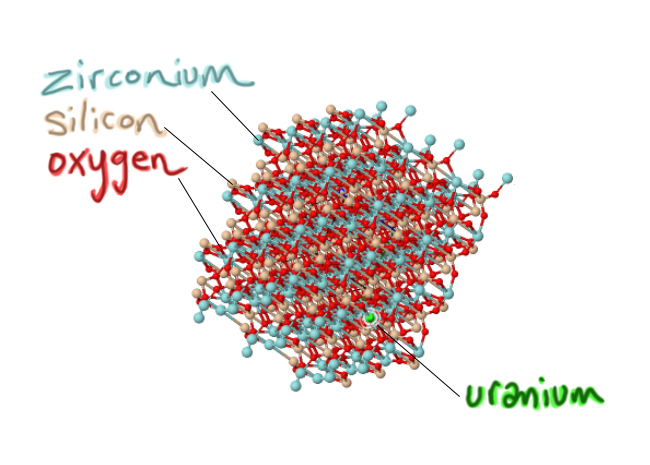

Now that we’ve established that big picture, let’s consider the small picture: the zircon crystals that are key to this study. Zircon is a mineral that is made from three elements: oxygen and silicon (O and Si, which are what most of the crust is made of), plus a less common element called zirconium (Zr). The chemical formula is ZrSiO₄.
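For a sense of scale on that “less common” zirconium, here is a minimal Python sketch that works out how much of a zircon’s mass each element contributes, starting from the formula ZrSiO₄. The rounded atomic weights are standard reference values I’ve supplied for illustration; they are not from the original post.

```python
# Standard atomic weights in g/mol (rounded reference values, supplied for illustration)
ATOMIC_WEIGHT = {"Zr": 91.224, "Si": 28.085, "O": 15.999}

# Zircon, ZrSiO4: one zirconium, one silicon, and four oxygens per formula unit
ZIRCON = {"Zr": 1, "Si": 1, "O": 4}


def molar_mass(formula: dict[str, int]) -> float:
    """Sum of atomic weight times atom count for a formula given as {element: count}."""
    return sum(ATOMIC_WEIGHT[el] * n for el, n in formula.items())


def mass_fractions(formula: dict[str, int]) -> dict[str, float]:
    """Share of the formula's total mass contributed by each element."""
    total = molar_mass(formula)
    return {el: ATOMIC_WEIGHT[el] * n / total for el, n in formula.items()}


if __name__ == "__main__":
    print(f"ZrSiO4 molar mass: {molar_mass(ZIRCON):.2f} g/mol")
    for el, frac in mass_fractions(ZIRCON).items():
        print(f"{el}: {frac:.1%} of the mass")
```

Zirconium is only one of the six atoms in each ZrSiO₄ unit, but because it is so much heavier than silicon or oxygen, it accounts for roughly half of the crystal’s mass.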

Here’s how those atoms get put together to make a zircon crystal in an interactive JSmol 3D model: Blue is zirconium, tan is silicon, and red is oxygen. Grab it and give it a wiggle:

Mindat.org model

Zircons are really, really useful in a range of different kinds of studies. The reasons for this are:

1) They are hard. On the Mohs scale, they rank a 7.5, which is harder than quartz.

2) They are stable (at chemical equilibrium) over a wide range of conditions. This means they don’t melt too easily, they don’t recrystallize too easily, and they don’t “rot” too easily. So a zircon can form in a magma that is cooling to make an igneous rock, then be uplifted to the surface unchanged, then be weathered out of that rock and tumble down a stream into a river and be dumped on a beach, and it’s still the same old mineral. This might not sound particularly shocking to the average person, but it’s a big deal. Many other minerals will rot away or change their shape if they get squeezed, or start vibrating their atomic bonds if they get hot, letting impurities out that don’t mesh with their crystal structure. (Now, why should that last bit matter? Read on!)

3) Very important: they are a little bit impure. One key impurity is uranium. In zircon’s crystal structure, atoms of the element uranium can substitute for the element zirconium. As the crystal is forming (growing atom by atom in a magma, surrounded by as-yet-unbonded atoms), it will add oxygens and silicons and zirconiums in their appropriate place as it grows, but if a uranium shows up, the growing crystal structure can cope with sticking that uranium atom where a zirconium “ought” to be. At the same time, other random atoms that show up are ‘rejected,’ because chemically they don’t fit in the growing zircon crystal. Lithium? Neon? Gold? The growing zircon crystal sneers: Take a hike. Aluminum? Lead? Carbon? Get lost. But if uranium shows up, knocking on the molecular door? Well, come on in! So a critical handful of zirconiums give up their places, substituted for by uranium:

Modified from screenshot of a Mindat.org model

Modified from screenshot of a Mindat.org model

The result of this strong selection is that the final zircon crystal carries a load of uranium ‘special guests’ who are now deep inside its lattice. But the uraniums don’t last. Uranium is radioactive, meaning that over time, some of those atoms will fall apart, spontaneously. The forces that hold the atom’s nucleus together can’t cope with all those neutrons and protons, and so eventually, it goes ka-pow. The nucleus spits out a small particle, releasing energy, and what’s left behind isn’t uranium any more: it’s a new element. That new atom is unstable too, so it decays in turn, and so on down a chain of steps. At the end of the chain is a non-radioactive element: lead (Pb). So over time, you end up with a growing accumulation of lead atoms produced by the radioactive decay of uranium. The lead is not “happy” inside the zircon crystal lattice where it suddenly finds itself. Remember, lead was actively rejected by the young, growing crystal. Chemically, the lead doesn’t belong: it’s more like a stowaway than a special guest. Geologists can compare a zircon crystal’s load of ‘special guest’ uranium atoms to its load of ‘stowaway’ lead atoms, and that ratio, combined with the rate at which uranium decays to lead (expressed as its half-life), makes it possible to figure out when the crystal first formed. Here’s a video I made (4 minutes) explaining the process: Radioactive decay and isotopic dating of rocks and minerals. The astonishing thing about this is that we are talking about a trace impurity within a mineral crystal the size of a grain of sand. It is often literally a grain of sand! And it can tell us something really profound: the numerical age of that grain, its “birthday” in an ancient magma chamber.
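The ratio-plus-half-life reasoning above can be written as the standard decay equation: radiogenic lead builds up as Pb/U = e^(λt) − 1, so t = ln(1 + Pb/U) / λ. Here is a minimal Python sketch for the uranium-238 to lead-206 system only, assuming the widely used decay constant λ = 1.55125 × 10⁻¹⁰ per year and a crystal that started with no lead and has lost none since. Real U–Pb work also uses the uranium-235 to lead-207 system and corrections that this sketch leaves out; the function names are my own.

```python
import math

# Decay constant for uranium-238 -> lead-206, per year (widely used value).
LAMBDA_U238 = 1.55125e-10


def half_life(decay_constant: float) -> float:
    """Half-life in years for a decay constant expressed per year."""
    return math.log(2) / decay_constant


def age_from_ratio(pb_to_u: float, decay_constant: float = LAMBDA_U238) -> float:
    """Years since the crystal formed, from its radiogenic 206Pb / remaining 238U ratio.

    Assumes a closed system: no lead at the start, and none lost since.
    """
    if pb_to_u < 0:
        raise ValueError("Pb/U ratio cannot be negative")
    return math.log1p(pb_to_u) / decay_constant


def ratio_from_age(age_years: float, decay_constant: float = LAMBDA_U238) -> float:
    """The Pb/U ratio a closed crystal of the given age should contain today."""
    return math.expm1(decay_constant * age_years)


if __name__ == "__main__":
    print(f"238U half-life: {half_life(LAMBDA_U238) / 1e9:.2f} billion years")
    print(f"Pb/U = 1.0 -> {age_from_ratio(1.0) / 1e9:.2f} billion years")
```

A Pb/U ratio of exactly 1 (equal numbers of lead and uranium atoms) means half of the original uranium has decayed, so it returns one half-life, about 4.47 billion years: a handy sanity check.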

So the general answer to the question is this: you must have (1) a mineral which takes in a radioactive parent isotope, but (2) actively excludes the daughter isotope as it is forming. Then (3) any daughter isotope produced through the radioactive breakdown of its parent needs to be “imprisoned” in the mineral crystal. Zircons pull this trick with uranium (parent) and lead (daughter). Other minerals like monazite can do the same thing. But the vast, vast majority of rock-forming minerals are worthless for isotopic dating, either because (1) they don’t have a spot in their crystal lattice for the radioactive parent isotope, or (2) they let plenty of daughter isotope into the crystal as it’s forming, so you can’t trust the measurement of daughter isotope to reflect the time since the mineral formed (who knows how much of it is part of the original load?), or (3) the mineral crystal cannot hang on to daughter product that is produced through radioactive decay. Minerals that “leak” their daughter isotope load will be worthless as isotopic ‘clocks.’ Any one of those conditions would rule out the use of that [mineral + isotope] system as a tool for geologic dating.
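To see why conditions (2) and (3) matter, here is a hypothetical Python sketch that asks what age a naive uranium–lead clock would report for a crystal that broke one of the rules: either it started with some lead already inside (inherited daughter), or it leaked some of its lead (lost daughter). It reuses the closed-system decay equation with the widely used uranium-238 decay constant; the scenario numbers are illustrative assumptions, not measurements.

```python
import math

# Decay constant for uranium-238 -> lead-206, per year (widely used value).
LAMBDA_U238 = 1.55125e-10


def apparent_age(true_age: float, initial_pb_to_u: float = 0.0,
                 fraction_pb_lost: float = 0.0) -> float:
    """Age (years) a naive Pb/U clock would report for a crystal of true_age.

    initial_pb_to_u: hypothetical lead present from the start, expressed
        relative to today's uranium (breaks condition 2: daughter not excluded).
    fraction_pb_lost: hypothetical share of the lead that leaked out, 0 to 1
        (breaks condition 3: daughter not imprisoned).
    """
    if not 0.0 <= fraction_pb_lost <= 1.0:
        raise ValueError("fraction_pb_lost must be between 0 and 1")
    radiogenic = math.expm1(LAMBDA_U238 * true_age)  # lead made by decay alone
    measured = (radiogenic + initial_pb_to_u) * (1.0 - fraction_pb_lost)
    return math.log1p(measured) / LAMBDA_U238


if __name__ == "__main__":
    true_age = 1.0e9  # a hypothetical 1-billion-year-old crystal
    cases = {
        "closed system": apparent_age(true_age),
        "inherited lead": apparent_age(true_age, initial_pb_to_u=0.05),
        "leaked 30% of lead": apparent_age(true_age, fraction_pb_lost=0.3),
    }
    for label, age in cases.items():
        print(f"{label}: {age / 1e6:.0f} million years")
```

Inherited lead pushes the apparent age older than the truth, and leaked lead pushes it younger. With no independent way to know how much of either happened, the clock can’t be trusted.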

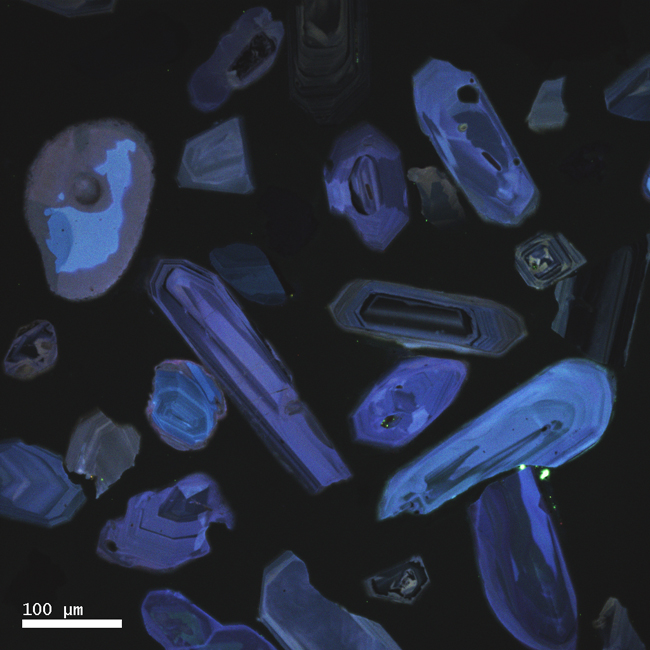

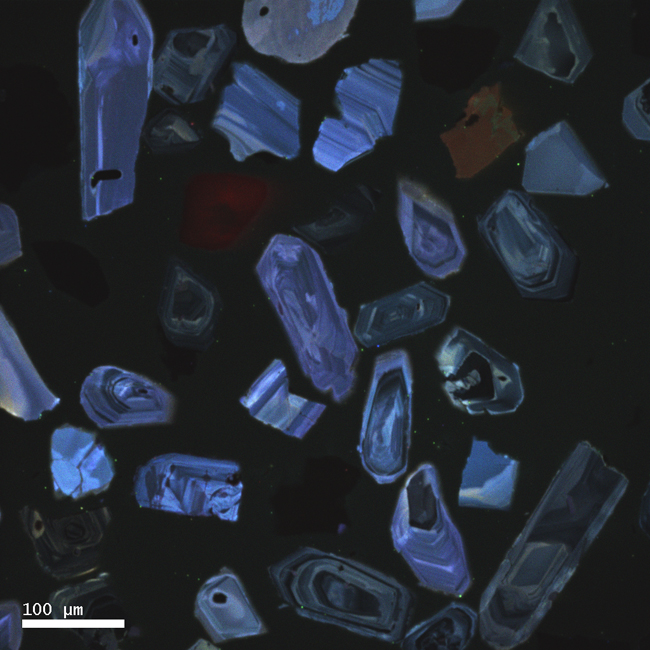

Not only that, but zircons are often zoned: meaning that you can see their history of growth preserved inside them, like the rings in a tree stump. Take a look at these two images for examples. They are produced using a special kind of microscopy called cathodoluminescence that makes the zoning really clear:

Courtesy of Karl Lang

Courtesy of Karl Lang

The neat thing about this is that it’s a kind of microscopic stratigraphy – the oldest parts of the crystal are in the middle, and the younger “layers” are outboard of that. We actually have instruments precise enough to date different parts of a single zircon crystal, and to be able to say (for instance) “the core of this zircon grew 1.2 billion years ago, then it added some layers around 360 million years ago.” When you weave in insights that can be gained from the study of the textures and structures in the crystal, you can make more profound statements still, stuff like “the core of this zircon grew 1.2 billion years ago in a magma chamber, then it was uplifted to the surface and tumbled down a river to a beach, then it was partially melted, then it was metamorphosed around 360 million years ago.” All that – from a grain of sand!
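The “microscopic stratigraphy” idea can be sketched by treating each growth zone as its own clock that started ticking when that zone grew. This hypothetical Python example uses the 1.2-billion-year core and 360-million-year rim from the paragraph above, the widely used uranium-238 decay constant, and an assumption of my own: that both zones hold the same uranium concentration, so an analysis spot straddling the boundary measures a simple weighted average of their Pb/U ratios.

```python
import math

LAMBDA_U238 = 1.55125e-10  # uranium-238 decay constant, per year


def pb_u_ratio(age_years: float) -> float:
    """206Pb/238U ratio built up in a closed zone since it grew."""
    return math.expm1(LAMBDA_U238 * age_years)


def age_from_ratio(ratio: float) -> float:
    """Invert the decay equation: years since the zone grew."""
    return math.log1p(ratio) / LAMBDA_U238


def mixed_spot_age(core_age: float, rim_age: float, core_fraction: float) -> float:
    """Apparent age if one analysis spot straddles core and rim.

    Assumes (hypothetically) equal uranium concentration in both zones,
    so the measured Pb/U is a weighted average of the two zones' ratios.
    """
    ratio = (core_fraction * pb_u_ratio(core_age)
             + (1.0 - core_fraction) * pb_u_ratio(rim_age))
    return age_from_ratio(ratio)


if __name__ == "__main__":
    core, rim = 1.2e9, 360e6  # the example zone ages from the text
    for name, t in (("core", core), ("rim", rim)):
        r = pb_u_ratio(t)
        print(f"{name}: Pb/U = {r:.4f}, age = {age_from_ratio(r) / 1e6:.0f} Ma")
    print(f"50/50 mixed spot: {mixed_spot_age(core, rim, 0.5) / 1e6:.0f} Ma")
```

The straddling spot returns an age somewhere between the two zones, an age that corresponds to no real event in the grain’s history. That is one reason instruments precise enough to target individual zones are so valuable.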

So where does that leave us with the paper the questioner asked about, the new one from Lewis Ashwal and colleagues (Ashwal, et al., 2017)? It details dating work on zircons from the island of Mauritius (former home of the extinct giant flightless pigeon called the dodo) in the Indian Ocean, east of Madagascar.

Here’s Figure 1 from the paper:

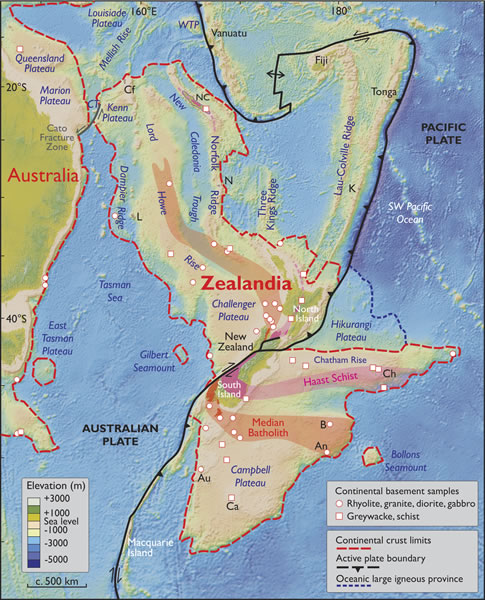

I’ll decode that for you, at least insofar as it’s relevant to this discussion. Southwestern India and eastern Madagascar share similar geology, and are interpreted to have once been adjacent. As they broke up, some chunks of continental crust slipped into the gap between them, swathed in basalt from the seafloor spreading that generated new oceanic crust between the two separating ‘continents.’ Imagine tearing a loaf of rye bread in half, and pouring brownie batter into the gap between the halves. Some bread crumbs and rye seeds may fall into the gap, and be draped in chocolate. The island of Mauritius, marked on the map with a circled “M,” is interpreted to be atop one of these crumbs. The rye seeds are its zircons, which don’t match up with the volcanic “brownie” that surrounds them.

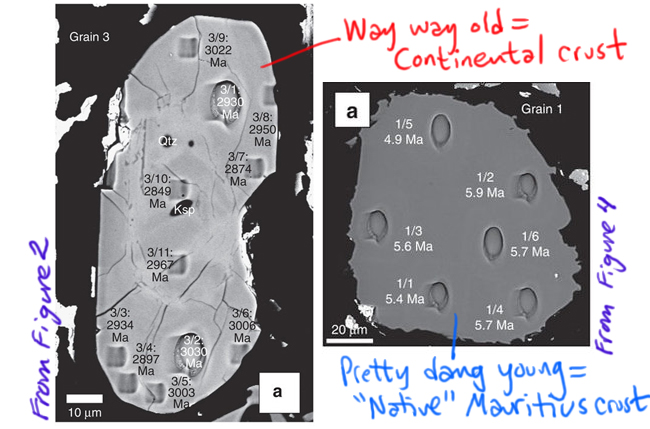

This study looked at 13 zircon crystals extracted from a sample of trachyte (a quartz-poor volcanic rock) from Mauritius. Ten of them had young ages (~5 million years old), which is the sort of thing you would expect for a volcanic island in the middle of oceanic crust. But three of them had much older ages, from 2500 to 3000 million years old (depending on what part of the crystal you analyzed). Take a look at two of the grains, one example from each group, annotated by me from Figures 2 and 4 in the Ashwal, et al. (2017) paper. Each is shot full of holes from the ion beam that was used to measure the uranium and lead isotopes to get the ages.

[decoding a few things in that figure: Ma stands for “mega-annum” (annum is Latin for “year”), meaning millions of years ago; μm means micrometer, or micron, a unit of distance that is one-millionth of a meter. Qtz and Ksp are abbreviations for small chunks of non-zircon minerals, quartz and potassium feldspar, that are included within the zircon crystal.]

The age discrepancy would be unexpected if Mauritius were 100% oceanic crust. The three old grains are 500 to 600 times older than the rock of Mauritius generally is. Those astonishingly old dates fall in the Archean eon of geologic time, and largely within the last part of that eon, the Neoarchean era. Note the position of Mauritius on the map above, and notice the adjacent bands of light blue in both Madagascar and India: these blue zones are Neoarchean rocks, with similar ages. So that all lines up really nicely. It suggests those continental crust rocks were the source of the zircons that somehow got incorporated into Mauritius’ much, much, much younger (~5 million year old) volcanic trachyte.
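The age comparisons here are simple arithmetic, sketched below in Python: the old grains’ reported range (2500 to 3000 million years) divided by the ~5-million-year age of the trachyte, plus a lookup against era boundaries. The boundary values (Neoarchean 2800 to 2500 Ma, Mesoarchean 3200 to 2800 Ma) come from the International Chronostratigraphic Chart, not from the Ashwal paper; note that they place the oldest end of the range in the Mesoarchean rather than the Neoarchean.

```python
# Era boundaries in Ma (millions of years ago), from the International
# Chronostratigraphic Chart; only the two Archean eras relevant here are listed.
ARCHEAN_ERAS = (
    ("Neoarchean", 2500.0, 2800.0),   # (name, younger bound, older bound)
    ("Mesoarchean", 2800.0, 3200.0),
)

TRACHYTE_AGE_MA = 5.0                  # approximate age of the young grains
OLD_GRAIN_RANGE_MA = (2500.0, 3000.0)  # range reported for the three old grains


def era_for(age_ma: float) -> str:
    """Name the Archean era containing age_ma (a boundary age goes to the older era)."""
    for name, younger, older in ARCHEAN_ERAS:
        if younger <= age_ma < older:
            return name
    return "outside the Neoarchean and Mesoarchean"


def times_older(age_ma: float, reference_ma: float = TRACHYTE_AGE_MA) -> float:
    """How many times older age_ma is than the reference age."""
    return age_ma / reference_ma


if __name__ == "__main__":
    for age in OLD_GRAIN_RANGE_MA:
        print(f"{age:.0f} Ma: {era_for(age)}, {times_older(age):.0f}x the trachyte's age")
```

Run as a script, this reports 500x for the 2500 Ma end of the range (Neoarchean) and 600x for the 3000 Ma end (Mesoarchean).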

So there is strong evidence for some continental crust under Mauritius, in the form of these three sand-sized grains of zircon, extracted from a single sample of trachyte rock. The very existence of these crystals, tiny as they are, signals that there is more going on beneath Mauritius than the usual oceanic crust ± mantle plume. But is this enough evidence to posit “Mauritia” as its own “continent”? I’m not convinced that claim is warranted. The world is a messy place, and while these grains demonstrate that there must be some Archean crustal material being “tapped into” beneath the volcanoes of this island, I can think of ways of doing that without invoking a whole new “continent.” That said, the proportion of Neoarchean zircons in the study (three out of 13, or ~23% of the grains dated) suggests that the proportion of continental crustal material might be pretty significant. What would a second sample of rock tell us? How would the story change when we hear the testimony of another dozen zircons? In summary: This study documents a tantalizing finding, and if I were in the business of dating zircons, it would motivate me to scour Mauritius and neighboring islands and neighboring seafloor for evidence that might corroborate this interpretation (or negate it entirely).
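One way to put numbers on “what would another dozen zircons tell us?” is a Wilson score interval, a standard confidence interval for a proportion, applied to the 3-of-13 old grains. This Python sketch treats each grain as an independent random draw, which real zircon populations are not, and the share of zircons need not match the share of crust (rocks differ in how much zircon they carry), so read it only as a rough sense of sampling uncertainty. The 6-of-26 case is hypothetical: the same ~23% with twice the grains.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z = 1.96 gives ~95%)."""
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need n > 0 and 0 <= successes <= n")
    p_hat = successes / n
    denom = 1.0 + z * z / n
    center = (p_hat + z * z / (2 * n)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / n + z * z / (4 * n * n)) / denom
    return center - half_width, center + half_width


if __name__ == "__main__":
    for old, total in ((3, 13), (6, 26)):  # observed, then a hypothetical doubling
        lo, hi = wilson_interval(old, total)
        print(f"{old} of {total} old grains: roughly {lo:.0%} to {hi:.0%}")
```

Three old grains out of 13 is consistent with an underlying share anywhere from under a tenth to about half; the hypothetical 6-of-26 case shows that range narrowing, which is roughly what another dozen zircons could buy.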

Here’s something apropos: As I was preparing this blog post, another study was announced, with much the same “hidden continent” implications: this time it’s in the Pacific Ocean, and the “continent” is dubbed Zealandia. ’Tis the season for discovering lost continents, it would seem!

Here’s Figure 2, the map, from that paper (Mortimer, et al., 2017):

In arguing for Zealandia as a continent, Nick Mortimer and his colleagues have more to work with than three grains of zircon. Their case is built on the thick crust surrounding New Zealand and New Caledonia, as well as on its geology: bands of rocks like granite, rhyolite, graywacke, schist, and gneiss, arranged in what they interpret to be orogenic belts, and rocks like limestone and quartzite, marking out what they interpret as sedimentary basins puddled on top of the continental crust “basement.” Orogenic belts are basically mountain belts (places where plates have collided and built mountains) where the topographic peaks of the mountains have been ground down by erosion and “leveled off.” To my mind, that’s a pretty compelling case for a big piece of continental crust in “Zealandia,” in contrast to “Mauritia.”

________________________________________

CITATIONS

Ashwal, L. D., Wiedenbeck, M., and Torsvik, T. H., 2017. Archaean zircons in Miocene oceanic hotspot rocks establish ancient continental crust beneath Mauritius. Nature Communications 8, article 14086. doi:10.1038/ncomms14086

Mortimer, N., Campbell, H. J., Tulloch, A. J., King, P. R., Stagpoole, V. M., Wood, R. A., Rattenbury, M. S., Sutherland, R., Adams, C. J., Collot, J., and Seton, M., 2017. Zealandia: Earth’s Hidden Continent. GSA Today, March/April 2017. doi:10.1130/GSATG321A.1

Müller, R. D., Sdrolias, M., Gaina, C., and Roest, W. R., 2008. Age, spreading rates, and spreading asymmetry of the world’s ocean crust. Geochemistry, Geophysics, Geosystems 9, Q04006. doi:10.1029/2007GC001743

Torsvik, T. H., Amundsen, H., Hartz, E. H., Corfu, F., Kusznir, N., Gaina, C., Doubrovine, P. V., Steinberger, B., Ashwal, L. D., and Jamtveit, B., 2013. A Precambrian microcontinent in the Indian Ocean. Nature Geoscience 6, 223–227. doi:10.1038/ngeo1736

Callan Bentley is Associate Professor of Geology at Piedmont Virginia Community College in Charlottesville, Virginia. He is a Fellow of the Geological Society of America. For his work on this blog, the National Association of Geoscience Teachers recognized him with the James Shea Award. He has also won the Outstanding Faculty Award from the State Council on Higher Education in Virginia, and the Biggs Award for Excellence in Geoscience Teaching from the Geoscience Education Division of the Geological Society of America. In previous years, Callan served as a contributing editor at EARTH magazine, President of the Geological Society of Washington, and President of the Geo2YC division of NAGT.
